The Short Answer

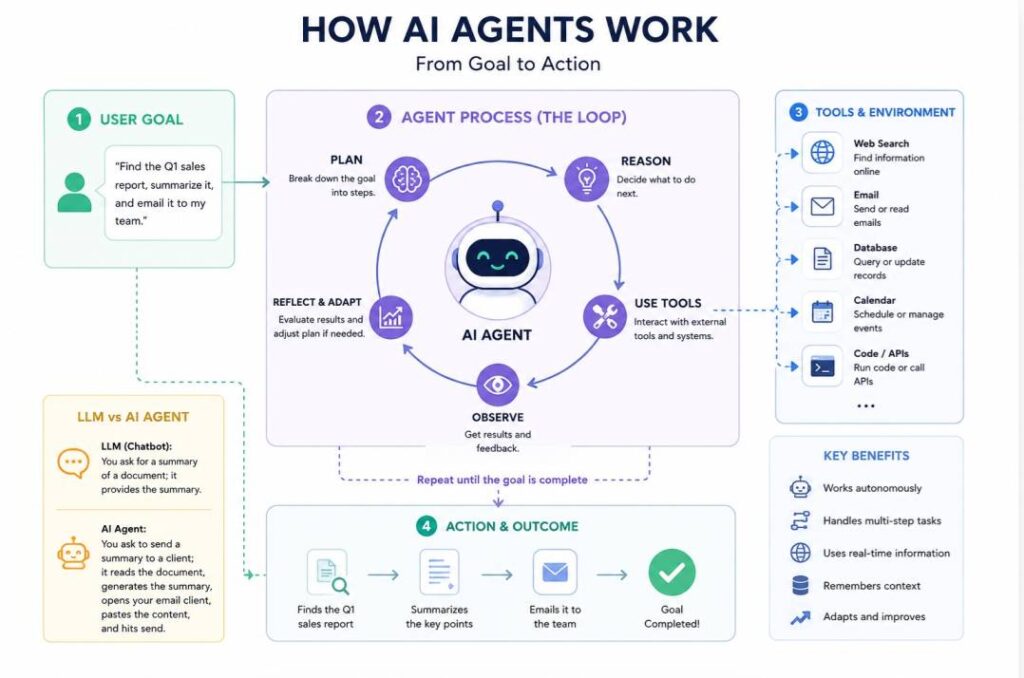

An AI agent is a software system that can autonomously pursue a goal across multiple steps and multiple tools without needing a human to approve each move.

You give it an objective, it makes a plan, takes action, checks the result, and adjusts until the task is done. That is fundamentally different from a chatbot, which waits for your next prompt, or a copilot, which helps you work inside a single application.

Unlike traditional chatbots that only produce text responses, agents can carry out actions such as updating systems, sending emails, or navigating the web to complete multi-step tasks with limited human input.

What Is an AI Agent? (Full Definition)

AI agents are autonomous systems that perceive their environment, reason about what needs to happen, and take real-world actions — things like running code, querying databases, sending emails, calling APIs, or coordinating with other agents — without requiring human approval at every step.

The simplest way to think about it: a chatbot answers a question, an agent completes a task.

The core loop that most agents run on is: perceive, plan, act, observe, repeat. If an action fails or produces the wrong result, the agent notices and tries a different approach. This is what makes them genuinely useful for complex, multi-step work.
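The perceive, plan, act, observe loop can be sketched in a few lines of Python. This is a minimal illustration, not a framework. `plan_next_step` is a hypothetical stand-in for the LLM call a real agent would make, and `lookup_order` is a hypothetical tool with made-up data. What matters is the control flow: a failed action is recorded as an observation and fed back into planning, rather than ending the run.

```python
from typing import Callable, Optional

def lookup_order(order_id: str) -> str:
    """Hypothetical tool: a real agent would query a database or API here."""
    orders = {"A100": "shipped", "A200": "delayed"}
    if order_id not in orders:
        raise KeyError(f"unknown order id {order_id!r}")
    return orders[order_id]

# Registry of tools the agent is allowed to call, keyed by name.
TOOLS: dict[str, Callable[[str], str]] = {"lookup_order": lookup_order}

def plan_next_step(goal: str, history: list[dict]) -> Optional[tuple[str, str]]:
    """Hypothetical stand-in for the LLM planner.

    A real agent would send the goal and the history (its perception of
    what has happened so far) to a model. Returns (tool_name, argument)
    for the next action, or None when the goal is met.
    """
    if not history:
        return ("lookup_order", "a100")           # first attempt: wrong casing
    last = history[-1]
    if last["ok"]:
        return None                               # success observed: goal met
    return ("lookup_order", last["arg"].upper())  # adjust after the failure

def run_agent(goal: str, max_steps: int = 5) -> list[dict]:
    history: list[dict] = []                      # running log of actions and results
    for _ in range(max_steps):                    # cap steps so the loop always ends
        step = plan_next_step(goal, history)      # perceive + plan
        if step is None:
            break
        tool_name, arg = step
        try:
            result = TOOLS[tool_name](arg)        # act
            history.append({"tool": tool_name, "arg": arg, "ok": True, "result": result})
        except Exception as exc:                  # observe the failure instead of stopping
            history.append({"tool": tool_name, "arg": arg, "ok": False, "result": str(exc)})
    return history

if __name__ == "__main__":
    for entry in run_agent("Find the status of order A100"):
        print(entry)
```

Running it shows the first lookup failing on the lowercase ID, the planner adjusting, and the second attempt succeeding. The `max_steps` cap is a common safeguard so a confused agent cannot loop forever.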

Key characteristics of AI agents:

- Autonomy: operate independently toward goals without step-by-step prompts

- Goal-oriented planning: break complex tasks into smaller steps

- Tool usage: interact with APIs, browsers, and external systems

- Memory and context: retain information across tasks for better outcomes

Difference between AI agents and LLMs:

- LLM: provides reasoning and text responses only

- AI agent: uses an LLM plus tools to take real-world actions

- Example: LLM summarises a document; agent summarises it and emails it

Core components:

- Brain: large language model for reasoning and decision-making

- Memory: stores past interactions and context

- Tools: connects to external software and systems

- Planning: structures tasks into executable steps and adapts when needed

Common use cases:

- Autonomous digital assistants for scheduling and email management

- Customer support systems that query databases and resolve issues

- Research tools that gather and synthesise web information

- Software agents that write, debug, and deploy code

AI Agent vs Chatbot vs Copilot: What Is the Actual Difference?

This is one of the most searched questions around agentic AI, and a lot of vendors muddy the water by calling copilots “agents.” Here is the honest breakdown:

- Chatbot: Answers questions in a single-turn, stateless interaction. No memory of previous steps, no ability to act in external systems. Early AI features in most software were chatbot-tier.

- Copilot: Embedded inside one application and helps you work faster inside that app. Useful, but it cannot reach outside the tool it lives in. Microsoft Copilot in Word is a good example.

- AI Agent: Given a goal, pursues it autonomously across multiple systems and steps. It can open a ticket, pull data from a database, send a notification, and write a report — all as one connected workflow, without being prompted for each action.

The practical test, as one accounting-focused AI firm puts it: if you removed the AI and the software still worked (just slower), it was a copilot. If the entire workflow collapses without it, it is an agent.

How Do AI Agents Work?

Most production-grade agents are built on five components:

1. Perception: The agent takes in input from its environment. This could be a user instruction, an API response, a file, sensor data, or output from another agent.

2. Reasoning: A large language model (or a smaller specialised model) breaks the goal down into steps, decides which tools to use, and determines what order to execute them in.

3. Memory: Agents use two types of memory. Episodic memory tracks what just happened in the current task (like a running log passed into the model’s context window).

Semantic memory is broader factual knowledge, often retrieved from a vector database using retrieval-augmented generation (RAG) so the agent pulls the right information at the right moment.

4. Tool Use: Agents take actions by calling tools such as web search, code execution, file systems, databases, external APIs, or other agents. The Model Context Protocol (MCP), an open standard originally introduced by Anthropic, has become the dominant way agents connect to external tools.

By early 2026, more than 10,000 public MCP servers were in deployment, giving agents a standardised interface rather than requiring custom integration work for every connection.

5. Action and Feedback: The agent acts, observes the result, and decides what to do next. Unlike traditional software, when something goes wrong it does not throw an error and stop. It reasons about the failure and tries again.
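The two kinds of memory described in the Memory step can be illustrated with a small sketch. The assumptions are significant: a production agent would use a learned embedding model and a vector database, whereas here a bag-of-words `Counter` with cosine similarity stands in for both, and the knowledge snippets are invented examples. `episodic` is a bounded running log, and `retrieve` is the retrieval step of RAG.

```python
import math
from collections import Counter, deque

# Episodic memory: a bounded log of recent steps in the current task.
# Only the last 4 entries are kept, mimicking a limited context window.
episodic: deque = deque(maxlen=4)

# Semantic memory: a tiny "knowledge base" of invented example facts.
KNOWLEDGE = [
    "refunds are issued within 14 days of a return",
    "premium customers get free express shipping",
    "support hours are 9am to 5pm on weekdays",
]

def embed(text: str) -> Counter:
    # Stand-in for an embedding model: word counts (assumption, not a real embedding).
    return Counter(text.lower().split())

def cosine(a: Counter, b: Counter) -> float:
    dot = sum(count * b[word] for word, count in a.items())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

# Stand-in for a vector database: (document, vector) pairs computed once up front.
INDEX = [(doc, embed(doc)) for doc in KNOWLEDGE]

def retrieve(query: str, k: int = 1) -> list[str]:
    """Return the k documents most similar to the query (the 'R' in RAG)."""
    q = embed(query)
    ranked = sorted(INDEX, key=lambda pair: cosine(q, pair[1]), reverse=True)
    return [doc for doc, _ in ranked[:k]]

def build_context(user_message: str) -> str:
    """Assemble what would be passed into the model's context window."""
    episodic.append(f"user: {user_message}")
    facts = retrieve(user_message)
    return "Relevant facts:\n" + "\n".join(facts) + "\n\nRecent steps:\n" + "\n".join(episodic)

if __name__ == "__main__":
    print(build_context("how many days until refunds are issued"))
```

Swapping `embed` for a real embedding model and `INDEX` for a vector store changes the components but not the shape of the flow: retrieve relevant facts, combine them with recent steps, and pass the result to the model.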

Multi-Agent Systems: When One Agent Is Not Enough

Many enterprise workflows in 2026 run not on a single agent but on coordinated networks of agents, each specialised for a different part of a task.

One agent handles document intake, another does classification, a third triggers downstream systems, and an orchestrator manages the sequence.

Databricks reported that multi-agent systems among their enterprise customers grew 327% in under four months, a figure that tells you how quickly adoption has moved from single assistants to distributed agent networks.

The analogy that sticks: think of it as a digital assembly line rather than a digital assistant. Each agent is a domain specialist, and the orchestrator keeps the line moving.
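The intake, classification, and downstream pattern described above can be sketched as a simple sequential orchestrator. Each "agent" here is a plain, hypothetical function; in practice each might wrap its own model, prompt, and tools. The sketch shows the division of labour: specialists read and update a shared job record, and only the orchestrator knows the order in which they run.

```python
from dataclasses import dataclass, field

@dataclass
class Job:
    """Shared record that each specialist agent reads and updates."""
    raw: str
    fields: dict[str, str] = field(default_factory=dict)
    log: list[str] = field(default_factory=list)

def intake_agent(job: Job) -> Job:
    # Hypothetical intake specialist: parse "Key: value" lines from the document.
    for line in job.raw.splitlines():
        if ":" in line:
            key, value = line.split(":", 1)
            job.fields[key.strip().lower()] = value.strip()
    job.log.append("intake: parsed fields")
    return job

def classification_agent(job: Job) -> Job:
    # Hypothetical classifier: a real one might call a model; this uses a simple rule.
    job.fields["category"] = "invoice" if "amount" in job.fields else "other"
    job.log.append(f"classification: {job.fields['category']}")
    return job

def downstream_agent(job: Job) -> Job:
    # Hypothetical trigger: records what it would send to downstream systems.
    if job.fields["category"] == "invoice":
        job.log.append(f"downstream: queued payment of {job.fields['amount']}")
    else:
        job.log.append("downstream: routed to manual review")
    return job

def orchestrator(raw: str) -> Job:
    # Only the orchestrator knows the order; each specialist handles one stage.
    job = Job(raw=raw)
    for agent in (intake_agent, classification_agent, downstream_agent):
        job = agent(job)
    return job

if __name__ == "__main__":
    print(orchestrator("Vendor: Acme\nAmount: 120.00").log)
```

A real orchestrator would also handle retries, branching, and parallel stages, but the separation of concerns stays the same.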

What Can AI Agents Actually Do? Real Use Cases in 2026

- Customer service: Rather than waiting for a complaint, a logistics agent can detect that a delivery van has broken down, automatically reschedule the delivery, apply a service credit, and notify the customer by text — before the customer even knows there is a problem.

- Software engineering: Tools like Devin by Cognition handle multi-file coding tasks, run tests, fix failing tests, and iterate autonomously. Garry Tan, CEO of Y Combinator, demonstrated publicly that agents allowed him to rebuild a project that once required $10 million and ten people.

- Finance and accounting: AI agents now handle document intake, expense categorisation, accounts payable workflows, and client communication routing. Karbon’s 2026 data shows firms using agents save around 60 minutes per person per day. Wolters Kluwer found that firms that invest in AI training save up to seven weeks per employee per year.

- Network management: In telecoms, agents can detect a network anomaly, open a field service ticket, and alert the customer as one integrated, automatic sequence.

- Enterprise IT: Agents monitor production systems for semantic failures (where an agent returns a plausible but wrong answer with no error code thrown) — a problem that standard application monitoring has no concept of.
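The difference between a conventional health check and a semantic check can be shown with a toy example. The invoice data and the faulty agent response are invented for illustration. The idea is that the semantic check recomputes the expected answer independently instead of trusting a success status.

```python
import math

def status_check(response: dict) -> bool:
    # What conventional monitoring looks at: did the call report success?
    return response.get("status") == "ok"

def semantic_check(response: dict, line_items: list[float], tolerance: float = 0.01) -> bool:
    # Recompute the answer independently and compare it with what the agent claimed.
    claimed = response.get("total")
    if not isinstance(claimed, (int, float)):
        return False
    return math.isclose(claimed, sum(line_items), abs_tol=tolerance)

if __name__ == "__main__":
    items = [100.0, 250.0, 49.5]
    # Hypothetical agent output: well-formed and "successful", but it dropped a line item.
    response = {"status": "ok", "total": 350.0}
    print("status check passed:", status_check(response))
    print("semantic check passed:", semantic_check(response, items))
```

Here the status check passes while the semantic check fails, which is exactly the kind of failure that never shows up as an error code.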

Where Is Agentic AI Right Now? The Adoption Gap

The honest picture is that adoption is moving fast, but most organisations have yet to reach production deployment.

Gartner found that 75% of enterprises are experimenting with AI agents, but only 15% have deployed fully autonomous, goal-driven systems in production.

Deloitte’s 2026 Tech Trends report found that just 11% of organisations had agents in genuine production, even though 38% were running pilots. The gap between pilot and production is the defining challenge.

The organisations closing it share a few traits: they target a specific, measurable business problem rather than experimenting broadly; they prioritise governance and data quality over model selection; and they treat change management as a continuous process, not a one-time launch.

The Role of MCP: Why It Matters for How Agents Connect

Before the Model Context Protocol existed, connecting an AI agent to a tool meant writing custom integration code for every combination of model and tool. If one tool’s API changed, every integration depending on it had to be updated by hand.

MCP standardises that interface, the way USB-C standardised physical connectors. Any application that supports MCP can talk to any MCP-compatible tool without bespoke glue code.
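To make the shared interface concrete: MCP messages use JSON-RPC 2.0, and the specification defines methods such as `tools/list` (discover what a server offers) and `tools/call` (invoke a tool by name with arguments). The sketch below only builds request messages. It leaves out the connection handshake and the transport (for example, stdio or HTTP), it is not the official MCP SDK, and the tool names `search_tickets` and `get_weather` are hypothetical.

```python
import json
from itertools import count

_request_ids = count(1)  # JSON-RPC requests carry an id so responses can be matched

def jsonrpc_request(method: str, params: dict) -> str:
    """Serialise a JSON-RPC 2.0 request, the message format MCP is built on."""
    return json.dumps({
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": method,
        "params": params,
    })

def list_tools() -> str:
    # Ask a server which tools it exposes.
    return jsonrpc_request("tools/list", {})

def call_tool(name: str, arguments: dict) -> str:
    # The envelope is identical for every tool on every server; only name/arguments differ.
    return jsonrpc_request("tools/call", {"name": name, "arguments": arguments})

if __name__ == "__main__":
    print(list_tools())
    print(call_tool("search_tickets", {"status": "open"}))  # hypothetical tool
    print(call_tool("get_weather", {"location": "Berlin"}))  # hypothetical tool
```

Because every tool is invoked through the same envelope, a client that can send `tools/call` to one MCP server can send it to any other. That uniformity is what removes the per-tool glue code.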

By 2026, most major AI frameworks and enterprise tools offer native MCP compatibility, and the protocol has been donated to the Agentic AI Foundation as open infrastructure.

This matters because it is what made multi-agent collaboration across vendor boundaries a practical reality in 2026 rather than a research project.

What Comes Next: The Trends Shaping Agents Through 2026

- Context engineering is replacing prompt engineering. The conversation has shifted from “how do you phrase the question?” to “what data, memory, and context does the agent have access to?” A well-governed agent with the right context reliably outperforms a sophisticated model with poor data access.

- Smaller models will do more of the work. IBM’s research team notes that the industry is hitting diminishing returns from scaling large frontier models. The emerging pattern is routing: smaller, faster, cheaper models handle most tasks and hand off to larger models only when the complexity genuinely requires it. Google Cloud describes this as cooperative model routing.

- Physical AI is accelerating. Amazon deployed its millionth robot, with its DeepFleet AI coordinating the entire warehouse robot fleet. BMW has cars driving themselves through kilometre-long production routes. Robotics and physical AI, where agents sense, act, and learn in real environments, is where many researchers see the next major frontier.

- Security is becoming a first-class concern. Connecting agents to thousands of external MCP servers introduces real attack vectors, such as tool poisoning, in which a malicious server injects instructions (for example, hidden in its tool descriptions) to manipulate agent behaviour. Governance frameworks that define which servers an agent can reach, with full audit trails, are now a requirement rather than an afterthought.
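Two of these trends translate directly into code. The sketch below shows cooperative model routing (send easy tasks to a small model, escalate harder ones) and an MCP server allowlist that records every decision in an audit log. Both are minimal illustrations under assumptions: the model names are placeholders, the keyword-based complexity score is a crude stand-in for whatever classifier a real router would use, and the allowed host is an invented example.

```python
import time
from urllib.parse import urlparse

# --- Cooperative model routing (placeholder model names; heuristic is an assumption) ---
def estimate_complexity(task: str) -> int:
    # Crude stand-in: long tasks and multi-step keywords count as harder.
    score = len(task.split()) // 20
    score += sum(word in task.lower() for word in ("plan", "analyse", "compare", "multi-step"))
    return score

def route(task: str, threshold: int = 2) -> str:
    # Escalate to the larger model only when the estimated complexity warrants it.
    return "large-model" if estimate_complexity(task) >= threshold else "small-model"

# --- MCP server allowlist with an audit trail (example host is invented) ---
ALLOWED_HOSTS = {"tools.internal.example.com"}
AUDIT_LOG: list[dict] = []

def authorise_server(agent_id: str, server_url: str) -> bool:
    # Check the server's host against the allowlist and record every decision.
    host = urlparse(server_url).hostname or ""
    allowed = host in ALLOWED_HOSTS
    AUDIT_LOG.append({"ts": time.time(), "agent": agent_id, "server": server_url, "allowed": allowed})
    return allowed

if __name__ == "__main__":
    print(route("Summarise this email"))
    print(route("Plan and compare three vendor contracts"))
```

Logging denials as well as approvals is the design choice that matters for governance: the audit trail then shows what an agent tried to reach, not just what it was allowed to reach.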

Key Terms to Know

- Agentic AI: Any AI system capable of autonomous, multi-step action toward a goal.

- MCP (Model Context Protocol): An open standard that lets AI agents connect to external tools and data sources through a shared interface, without custom integration work.

- Multi-agent system: A network of specialised AI agents that collaborate on a task, each handling a different part of the workflow.

- Context engineering: Designing the information architecture around an agent (what data it can see, what knowledge bases it has access to, how memory is structured) rather than just optimising prompts.

- RAG (Retrieval-Augmented Generation): A technique where an agent retrieves relevant documents from a knowledge base at query time, rather than relying solely on what the underlying model was trained on.

- Headless AI: Systems where the agent interface, not a dashboard, is the product. When agents do the work, the question is no longer “where do I find this in the UI?” but “can the agent reach it programmatically?”

Final Summary

AI agents are the most consequential shift in how software works since the smartphone. They are moving from experimental to essential, and the organisations getting real value from them are the ones treating agent deployment as an infrastructure and governance problem, not a model selection problem.

The question for 2026 is not whether AI agents will matter to your business. It is how quickly you can move from pilot to production.