Loneliness and social isolation are now recognised as major public health threats, prompting governments to explore technological solutions.

But new research from Monash University is pushing back hard against one of the fastest-growing “fixes”: AI-powered “digital companions” marketed as an antidote to loneliness.

The study argues the concept is not just misguided — it is “profoundly unethical” — and warns these tools could ultimately deepen the very isolation they claim to relieve.

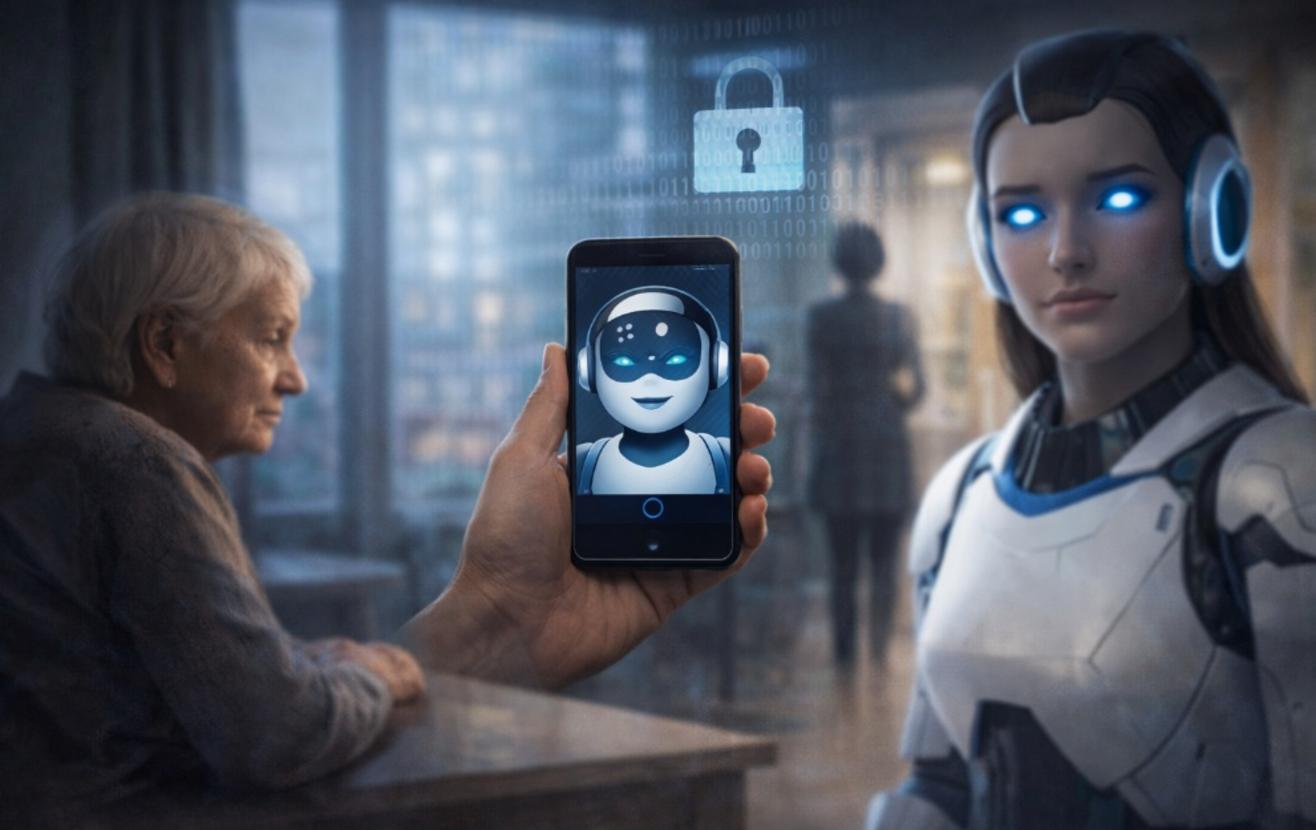

Published as “Against Imaginary Friends: why digital companions are no solution to social isolation”, the research takes aim at chatbots and avatar-style companions being pitched as substitutes for real social contact, particularly for older people.

The authors argue that while these systems can mimic warmth and conversation, they offer only an illusion of connection — one that risks normalising solitude rather than reducing it.

Lead researcher Professor Robert Sparrow, from the Monash Arts Faculty’s Department of Philosophy, said the push to deploy digital companions ignores the fundamental need for human connection.

“Encouraging people to have imaginary friends is no solution to social isolation. A digital companion might make someone feel less lonely for a moment, but it doesn’t change the fact that they’re still alone,” Professor Sparrow said.

The research highlights the ethical problem of “designing to deceive,” noting that companies routinely market digital companions as caring, attentive and emotionally invested, despite being incapable of genuine feeling.

The authors also argue that the project of designing social robots for eldercare settings is inherently disrespectful to older people.

“AI companions are being touted as a solution to the problem of eldercare workers, yet every interaction that older people have with a robot is one less opportunity for them to interact with a human,” Professor Sparrow said.

“These digital companions are marketed as the answer to an ageing population, despite the fact they would not be considered desirable if directed toward younger people,” he said.

Beyond emotional risks, the authors emphasise that digital companions cannot provide users with physical companionship, and warn that widespread adoption could reduce opportunities for physical touch and mutual aid.

“There’s a real danger that digital companions will become a cheap substitute for genuine human connection and care.

“Providing people with AI imaginary friends in place of genuine policy reform lets governments off the hook and risks making the problem worse,” Professor Sparrow said.

The authors also raise concerns about user privacy given the intimate nature of the data provided and call for clearer regulation to ensure digital companions don’t become a convenient substitute for genuine reform aimed at addressing loneliness and social isolation.