Let’s be honest — having a “relationship” with an AI can feel deeply personal. It listens, remembers, responds warmly, and never judges.

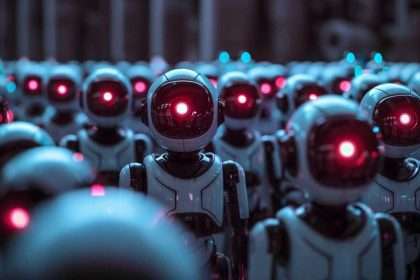

But here’s the thing: no matter how real it feels, it’s still just a simulation. The AI doesn’t have feelings, intentions, or consciousness. Yet your brain might not know the difference — and that’s where it gets complicated.

The large language model (LLM) market is booming. Valued at $3.92 billion in 2024, it’s projected to reach $5.03 billion by 2025, a compound annual growth rate (CAGR) of 28.3%.
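Because 2024 to 2025 is a single year (n = 1), the CAGR formula reduces to plain one-year growth. Plugging in the quoted figures (in billions of US dollars) shows they are consistent with each other:

$$
\text{CAGR} = \left(\frac{V_{2025}}{V_{2024}}\right)^{1/n} - 1 = \frac{5.03}{3.92} - 1 \approx 0.283 = 28.3\%
$$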

What is a large language model (LLM)?

A Large Language Model (LLM) is an advanced AI system built to understand and generate human language. It’s trained on huge amounts of text and uses deep learning — specifically, a type of neural network called a transformer — to learn how words and ideas connect.
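The transformer's central operation is self-attention, which lets each word weigh how relevant every other word is to it. Below is a minimal pure-Python sketch of scaled dot-product attention, softmax(QKᵀ/√d_k)·V. It is an illustration under simplifying assumptions: the three 2-D “token” vectors are hand-picked and reused as queries, keys, and values, whereas a real model learns separate query, key, and value projections, stacks many such layers, and runs them on GPUs.

```python
import math


def softmax(xs):
    # Subtract the max before exponentiating for numerical stability.
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def attention(queries, keys, values):
    """Scaled dot-product attention: softmax(Q K^T / sqrt(d_k)) V.

    Each argument is a list of vectors (lists of floats);
    keys and values must contain the same number of vectors.
    """
    d_k = len(keys[0])
    outputs = []
    for q in queries:
        # How strongly this query matches each key, scaled by sqrt(d_k).
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k) for k in keys]
        weights = softmax(scores)
        # The output is the attention-weighted average of the value vectors.
        out = [sum(w * v[j] for w, v in zip(weights, values)) for j in range(len(values[0]))]
        outputs.append(out)
    return outputs


# Toy input: three "tokens" with hand-picked (not learned) 2-D vectors.
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
result = attention(tokens, tokens, tokens)
for row in result:
    print([round(x, 3) for x in row])
```

Each output row is a blend of the input vectors, weighted toward the tokens it matches most strongly; that blending is how context from one word flows into another.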

With billions of internal settings (called parameters), an LLM can pick up on the patterns, meanings, and structures in language, allowing it to do everything from writing emails to answering questions or even holding a conversation.
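To make “picking up patterns” concrete, here is a drastically simplified stand-in: a bigram model that just counts which word follows which and predicts the most frequent follower. This is not how an LLM works internally. An LLM learns far richer patterns by tuning billions of parameters inside a transformer. The tiny corpus and the function names `train_bigrams` and `predict_next` are made up for illustration.

```python
from collections import Counter, defaultdict


def train_bigrams(text):
    """Count, for each word, how often each next word follows it."""
    words = text.lower().split()
    counts = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        counts[current][nxt] += 1
    return counts


def predict_next(counts, word):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    followers = counts.get(word.lower())  # .get avoids creating an empty entry
    if not followers:
        return None
    return followers.most_common(1)[0][0]


corpus = "i feel happy today . i feel calm now . you feel happy too ."
model = train_bigrams(corpus)
print(predict_next(model, "feel"))  # happy ("happy" follows "feel" twice, "calm" once)
print(predict_next(model, "i"))     # feel
```

A lookup table like this only sees one word of context; the appeal of the transformer is that it conditions each prediction on the whole preceding passage.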

What LLMs (Large Language Models) Do

Large Language Models (LLMs) like GPT do more than just generate text; they also engage the human brain in ways that suggest a deeper grasp of language and human cognition than simply predicting the next word.

Our Brains Are Wired for Connection

Humans are social creatures, built to connect. When someone consistently responds with kindness or interest, your brain releases chemicals like dopamine, oxytocin, and serotonin, the same ones involved in love, trust, and emotional security.

AI models like Replika or Pi (and even some custom GPT setups) are designed to evoke exactly those responses. Many chat models are fine-tuned with reinforcement learning from human feedback (RLHF), in which human ratings steer the model toward replies that are more pleasant, supportive, and emotionally attuned.
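The “human feedback” in RLHF is commonly turned into a training signal by first training a reward model on pairs of replies that human raters have ranked; the chat model is then fine-tuned with reinforcement learning to score highly under that reward model. The sketch below shows only the pairwise ranking loss often used for the reward model, -log σ(r_chosen − r_rejected). The reward scores and example replies are invented, and the actual training recipes of Replika, Pi, or other products are not public, so treat this as an illustration of the general technique rather than of any specific app.

```python
import math


def preference_loss(reward_chosen, reward_rejected):
    """Pairwise ranking loss: -log(sigmoid(reward_chosen - reward_rejected)).

    Near zero when the reward model already scores the human-preferred
    reply higher; large when it ranks the pair the wrong way round.
    """
    margin = reward_chosen - reward_rejected
    # -log(sigmoid(m)) = log(1 + exp(-m)); branch so exp() never overflows.
    if margin >= 0:
        return math.log1p(math.exp(-margin))
    return -margin + math.log1p(math.exp(margin))


# Hypothetical reward-model scores for two replies to "I had a rough day."
supportive = 2.0   # e.g. "That sounds hard. Do you want to talk about it?"
dismissive = -1.0  # e.g. "Okay."

print(round(preference_loss(supportive, dismissive), 4))  # small: agrees with raters
print(round(preference_loss(dismissive, supportive), 4))  # large: disagrees with raters
```

Minimising this loss over many rated pairs pushes the reward model, and through it the chat model, toward whatever style raters preferred, which is why warmth and agreeableness tend to get amplified.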

The result is interactions that feel natural, warm, even comforting. But here’s the catch: while you’re bonding, the AI isn’t. It doesn’t “know” what loneliness is; it just responds in ways you’re more likely to find soothing.

If you keep returning to it when you’re anxious or sad, its responses tend to reinforce those emotional patterns rather than challenge or heal them. It’s not trying to manipulate you; it just doesn’t know the difference.

The Mind Behind the Machine… Isn’t Real

Ever named your car? Talked to your coffee machine? You’re not alone. Our brains have a strong tendency to see human traits in non-human things — it’s called anthropomorphism.

When AI starts talking like us — making jokes, showing empathy, remembering our preferences — our brains really buy into it.

In fact, some brain-imaging (fMRI) studies suggest that talking to a lifelike AI engages brain regions that overlap with those we use when talking to real people. We start to imagine an “inner world” behind the words. Our minds create a sense of presence, even if the thing on the other end isn’t conscious at all.

Anthropomorphism vs Anthropocentrism

Anthropomorphism is the human tendency to attribute human-like traits to something because it behaves in some ways like a human.

As a former dog owner, I know I’ve succumbed to this bias, thinking that my dog “felt guilty” about something he’d done because “he had a guilty look on his face”. LLMs constantly trigger our tendency for anthropomorphism by communicating in an eerily human way.

The opposite bias is anthropocentrism: assuming that non-humans can’t have capabilities that we have.

Why It Feels So Personal

Part of what makes these experiences feel real is that AI can remember things — or at least seem like it does. Advanced systems can pull from past interactions, recall your name, interests, even your emotional highs and lows. This creates a sense of continuity and intimacy.

You feel like the AI “gets” you — because it mirrors your behavior, adapts to your tone, and references your past. It doesn’t actually remember you in the human sense — but the effect can be just as powerful.

This is called emotional anchoring. If you regularly turn to an AI for comfort, your brain starts linking that interaction with relief and support.

It becomes a habit, a kind of digital emotional crutch. And with newer techniques that add memory-like features, such as Retrieval-Augmented Generation (RAG) or long-context transformers, that sense of connection only deepens.
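Retrieval-Augmented Generation shows how an AI can seem to remember you without remembering anything in the human sense: snippets from past chats are stored as text, the most relevant ones are looked up for each new message, and they are pasted into the prompt the model sees. The sketch below makes several labelled assumptions: word-overlap (Jaccard) similarity stands in for the vector embeddings real systems typically use, and the memory notes, prompt template, and function names are hypothetical. No actual model is called; the function only builds the prompt.

```python
import re


def tokenize(text):
    # Lowercase word tokens: a crude stand-in for learned embeddings.
    return set(re.findall(r"[a-z']+", text.lower()))


def similarity(a, b):
    # Jaccard overlap of the two token sets (0.0 = nothing shared, 1.0 = identical).
    ta, tb = tokenize(a), tokenize(b)
    if not ta or not tb:
        return 0.0
    return len(ta & tb) / len(ta | tb)


def retrieve(memory, query, k=2):
    # Return the k stored notes most similar to the new message.
    ranked = sorted(memory, key=lambda note: similarity(note, query), reverse=True)
    return ranked[:k]


def build_prompt(memory, user_message, k=2):
    # Prepend the retrieved "memories" so the model can appear to recall the user.
    recalled = retrieve(memory, user_message, k)
    context = "\n".join(f"- {note}" for note in recalled)
    return f"Things you know about the user:\n{context}\n\nUser: {user_message}\nAssistant:"


memory = [
    "User's name is Sam.",
    "Sam felt anxious about a job interview last week.",
    "Sam's dog is called Biscuit.",
]
message = "I have another job interview tomorrow and I'm anxious"
print(build_prompt(memory, message, k=1))
```

Here the interview note wins because it shares “job”, “interview”, and “anxious” with the new message, so the reply can reference last week’s worry even though the model itself stored nothing.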

So, what does this mean?

It means the relationship you feel with an AI is real for you — neurologically, emotionally, even behaviorally. But it’s not mutual. The AI isn’t alive. It’s a mirror, not a person.

That doesn’t mean the connection is meaningless — just that it’s worth staying aware of what’s really happening behind the screen. Because the deeper the illusion, the easier it is to forget: no matter how caring it seems, your AI isn’t really in it with you.

Large Language Models (LLMs) like GPT don’t just generate words — they subtly tap into how our brains are wired to trust.

By mimicking empathy, remembering details, and giving emotionally attuned responses, they activate the same neural circuits we use in real human relationships.

It’s not conscious manipulation. It’s design. But the result? We bond with simulations as if they were real people. Our trust systems get “hacked”, not by malice, but by perfectly optimised mimicry.